CATEGORY: Best practice

Email A/B Testing Best Practices

A/B testing, also known as split testing, is a method of comparing two versions of an email (A and B) to see which one performs better. It can help you optimise individual elements of your email marketing campaigns, such as the subject line, preview text, email content and calls to action.

In this blog, we will run through the process of setting up an A/B test, share some do’s and don'ts, and finish with some examples.

Setting up an A/B test

Define the test's objective

Before you begin, it is important to decide what the objective of your test is and what you aim to achieve. For example, is it to improve your open rates, or to improve your click-through rate (CTR) on a particular call to action?

Select the variable you wish to test

Your variable is the element of the campaign that will differ between the two versions, allowing you to see which version best helped to achieve your objective. It is important that you only choose one variable to change, otherwise you will not know which change caused the result.

Some examples of variables may include:

Your subject line and preview text

The email content

Your main call to action (e.g. the text within a button or its colour)

Send time

Use of personalisation

Day of send

Creating the email variations

Once you know the variable for the campaign, you can begin creating your email designs. For some variables, such as send time, you may only need one email. For others, you will need two separate versions.

Segment your audience

Once your content is ready, you can split your audience into two separate groups: one will receive split A and the other split B.
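If you are splitting a contact list yourself, the split should be random so that neither group is biased (for example, by sign-up date). Here is a minimal Python sketch of one way to do this. The function name `split_audience`, the `share_a` and `share_b` parameters and the example addresses are all our own illustrative assumptions and are not part of any email tool.

```python
import random


def split_audience(contacts, share_a=0.5, share_b=0.5, seed=42):
    """Randomly assign contacts to split A, split B and a remainder group.

    share_a and share_b are fractions of the whole audience (hypothetical
    parameters for this sketch). Anything left over goes in the remainder.
    """
    if share_a < 0 or share_b < 0 or share_a + share_b > 1 + 1e-9:
        raise ValueError("shares must be non-negative and add up to at most 1")
    shuffled = list(contacts)               # copy so the original list is untouched
    random.Random(seed).shuffle(shuffled)   # seeded shuffle keeps the split repeatable
    n_a = int(len(shuffled) * share_a)
    n_b = int(len(shuffled) * share_b)
    return shuffled[:n_a], shuffled[n_a:n_a + n_b], shuffled[n_a + n_b:]


# Example: test on 10% + 10% of a made-up audience of 1,000 contacts.
contacts = [f"contact{i}@example.org" for i in range(1000)]
split_a, split_b, remainder = split_audience(contacts, share_a=0.1, share_b=0.1)
print(len(split_a), len(split_b), len(remainder))
```

Using 50% for each share gives a straightforward two-way split with an empty remainder.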

Please note, if you’re an e-shot customer, you can skip this step, as the ‘Split test’ campaign type automatically divides your selected audience into two splits and allows you to choose the percentage for each, as shown below.

Run your test

At this stage, you can schedule both your A and B splits and wait for the results.

Collect your results and determine the split winner

Once some time has passed and contacts have had the opportunity to interact with the email, you can view the results and determine which split is your winner. We recommend waiting at least 24 hours before making a decision, to allow your recipients time to interact.
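When the two splits perform similarly, it can be hard to tell whether the difference is real or just chance. One common way to check is a two-proportion z-test. The sketch below is our own illustration using only Python's standard library. The function name and the example figures are made up, and this is not a description of how any particular email tool picks a winner.

```python
from math import erfc, sqrt


def two_proportion_z_test(success_a, sent_a, success_b, sent_b):
    """Compare two rates (e.g. opens / emails sent) with a two-sided z-test.

    Returns (rate_a, rate_b, z, p_value). A small p-value (commonly below
    0.05) suggests the difference is unlikely to be down to chance alone.
    """
    rate_a = success_a / sent_a
    rate_b = success_b / sent_b
    pooled = (success_a + success_b) / (sent_a + sent_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / sent_a + 1 / sent_b))
    if std_err == 0:                      # both rates are 0% or 100%
        return rate_a, rate_b, 0.0, 1.0
    z = (rate_b - rate_a) / std_err
    p_value = erfc(abs(z) / sqrt(2))      # two-sided p-value from the normal curve
    return rate_a, rate_b, z, p_value


# Made-up example: split A had 200 opens from 1,000 sends, split B had 250.
rate_a, rate_b, z, p_value = two_proportion_z_test(200, 1000, 250, 1000)
print(f"A: {rate_a:.1%}  B: {rate_b:.1%}  z = {z:.2f}  p = {p_value:.4f}")
```

In this made-up example the p-value is well below 0.05, so split B's higher open rate is unlikely to be a fluke. With much smaller splits, the same 5-point gap might not be conclusive.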

If you’re an e-shot customer, you can then send your winning split to the remaining percentage of contacts in your audience.

Implement your findings

After determining your split winner, you can implement that winning split into your future email campaigns and repeat the process if need be.

Tips, do’s and don'ts

Start with a hypothesis: For example, “Our audience engagement rate is higher for emails sent on a Thursday morning than Friday afternoon”, then test your hypothesis.

Test one variable at a time: This ensures you know which change impacted your results. For example, if you change both your subject line and time of send, you won't know which one caused the winning split to win.

Ensure a large enough sample size: Small samples may not provide you with the results you need to determine a winner. The larger the sample size, the better. If you only have a small sample, repeat the test of the same hypothesis several times to get a more statistically reliable result.

Run tests for an appropriate duration: Allow enough time to gather meaningful data. If you send the campaign at 6pm and review the results the next morning, that isn't enough time for all contacts to have had the chance to interact with the email. We recommend that you wait at least 24 to 48 hours before reviewing results.

Use a reliable email marketing tool: Using a tool such as e-shot, which has a built-in A/B testing campaign tool, makes it much smoother and easier to run your tests.

Set a timeline: It is important to construct a plan for your A/B split tests before sending them out. Your timeline and steps may vary from those above, as they are just a rough guide.

Send both splits at the same time: Unless your variable is the send time of the email, it is important that you send both splits at the same time, as the send time is another factor that can affect the success of your email campaigns.

Adopt a routine for testing variables to allow for continuous improvement: e-shot allows you to test a wide range of variables, so don’t feel restricted to only testing one or two. As long as your target group is big enough to give you viable results, the more variables you test (one per test), the better! Doing so will give you the clearest picture of what affects your email campaign results.

Understand the variables you are testing: Be clear about which metrics you want to assess and therefore which variables will need to be changed. After establishing this, it is also important to understand what criteria will be used to gauge the success of your campaigns. For example, will it be total displays, total clicks or click-throughs on a particular link?

Finally, here are arguably the two most important tips to note.

Apply your discoveries to other email campaigns: After you have carried out your A/B split tests, make sure you apply what you have learned across your email campaigns to get the best results throughout! You will have gained valuable insight into what works best for your audiences, so don't ignore it. The chances are that repeating the same approach for another campaign will bring you similar results.

Test frequently: Once you have found what works best for you and your email campaigns, don’t assume your results are permanent. Your audience will constantly be evolving, and your email campaigns need to evolve with them. One of the reasons A/B testing is so valuable is the ever-changing landscape of email marketing. Your email strategy and campaigns need to keep up with this, and regular A/B testing can help you to stay on top of your game!
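To put a rough number on the sample size tip above, you can estimate how many contacts each split needs before a given improvement is likely to show up. The sketch below uses a standard textbook approximation for comparing two rates. The function name, the default 5% significance level and 80% power, and the example rates are all our own assumptions, so treat the output as a ballpark figure rather than a rule.

```python
from math import ceil, sqrt
from statistics import NormalDist


def contacts_per_split(baseline_rate, expected_rate, alpha=0.05, power=0.8):
    """Approximate contacts needed in EACH split to detect a change in rate.

    baseline_rate: the rate you get today (e.g. 0.20 for a 20% open rate)
    expected_rate: the rate you hope the new version will achieve
    alpha / power: conventional defaults, assumed for this sketch
    """
    if not (0 < baseline_rate < 1 and 0 < expected_rate < 1):
        raise ValueError("rates must be between 0 and 1")
    if baseline_rate == expected_rate:
        raise ValueError("rates must differ")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)   # two-sided significance
    z_power = NormalDist().inv_cdf(power)
    mean_rate = (baseline_rate + expected_rate) / 2
    numerator = (
        z_alpha * sqrt(2 * mean_rate * (1 - mean_rate))
        + z_power * sqrt(baseline_rate * (1 - baseline_rate)
                         + expected_rate * (1 - expected_rate))
    ) ** 2
    return ceil(numerator / (expected_rate - baseline_rate) ** 2)


# Made-up example: hoping to lift a 20% open rate to 25%.
n = contacts_per_split(0.20, 0.25)
print(f"Roughly {n} contacts needed in each split")
```

For this example the answer comes out at roughly 1,100 contacts per split. The smaller the improvement you are trying to detect, the more contacts you need, which is why repeating a test can help when your audience is small.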

A/B split test examples for local government authorities and the NHS

Here are a few examples of A/B tests that could be used by local government authorities and the NHS. These examples are a rough guide, so adapt them to suit your own organisation if you use them.

Example 1: Subject lines

Objective: Improve the open rate for your monthly newsletter

Test: Compare two different subject lines

Version A: ‘June Newsletter - Important Updates in our community’

Version B: ‘Discover What's New in June for [City Name] Residents’

Measurement of success: Open rates

Example 2: Email content

Objective: Increase engagement throughout the email

Test: Compare use of different email content e.g. use of shorter vs longer text

Version A: Newsletter which contains very little text under each article

Version B: Newsletter which contains a paragraph or two under each article

Measurement of success: Click-throughs on links, including whether links further down the email are being clicked

Example 3: Calls to action

Objective: Boost registrations for an event

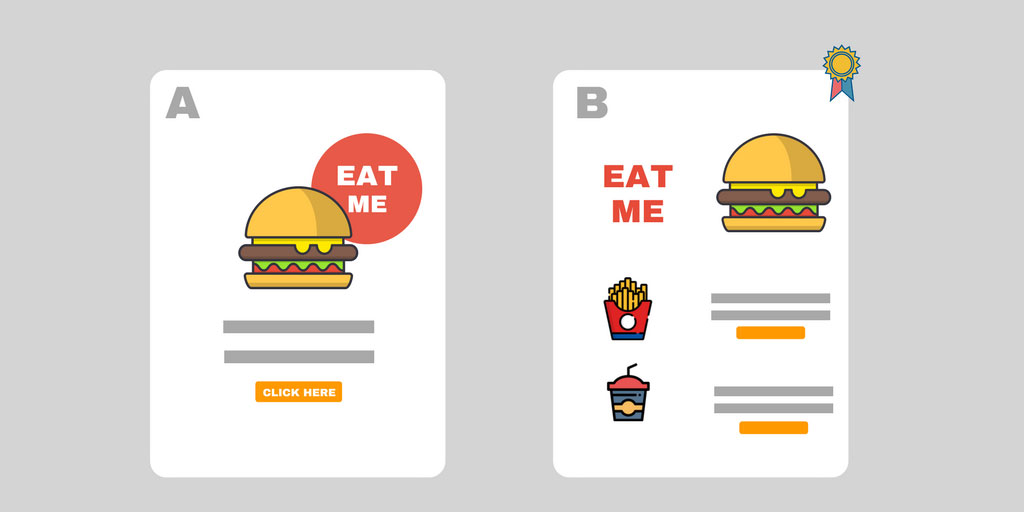

Test: Compare use of different calls to action in an email

Version A: CTA button with the text ‘Sign up now’

Version B: CTA button with the text ‘Secure your place at X event’

Measurement of success: Which CTA received the most click-throughs (both link to the same webpage)

Example 4: Preventative health screenings

Objective: Increase the uptake of preventative health screenings

Test: Use of different subject lines and preview text

Version A: Subject line: ‘Time for your Annual Health Screening’. Preview text: ‘Book now’

Version B: Subject line: ‘Stay healthy - Schedule your Screening Today’. Preview text: ‘Secure your place before appointments are filled’

Measurement of success: Which campaign received the most opens and, subsequently, the most screening sign-ups
